Eggplant AI is a single-screen web app that uses learning algorithms to auto-generate tests. The model-based approach of Eggplant AI makes it easier to develop tests for dynamically generated content, like websites and mobile apps. Modeling shifts the focus of testing from basic code compliance to the overall user experience.

Conventional test automation automates only the execution of manually written tests. Eggplant AI creates the tests themselves from a model of the application you're testing, and integrates with Eggplant Functional to execute those tests. Because learning algorithms generate the tests, more user journeys can be tested, including ones that a human tester would be unlikely to come up with.

Why Use Eggplant AI?

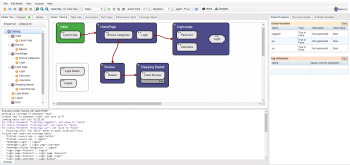

As a user, all you need to do to generate tests is build a simple model of the interface that you want to test. Eggplant AI applies AI reasoning to auto-generate the test cases based on your model. A model consists of states, which represent the pages or screens that users visit, and actions, which represent what users might do within a state or how they move between states.

When you run a model, Eggplant AI generates tests by traversing possible user journeys through the model. Those user journeys can be used to build tests using a core library of shared actions. A simple model of an application can generate millions of test scenarios.
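To make the idea of traversing a model concrete, here is a minimal sketch in Python. It is not Eggplant AI code and does not use any Eggplant API: the `MODEL` dictionary, the state and action names, and the `generate_journey` function are all hypothetical, and a uniform random choice stands in for whatever selection logic the product actually applies.

```python
import random

# Hypothetical model (not an Eggplant AI format): each state maps the
# actions available in it to the state that action leads to.
MODEL = {
    "Home": {"open_search": "Search", "open_cart": "Cart"},
    "Search": {"view_item": "Item", "go_home": "Home"},
    "Item": {"add_to_cart": "Cart", "back_to_search": "Search"},
    "Cart": {"checkout": "Done", "go_home": "Home"},
    "Done": {},  # terminal state: no further actions
}


def generate_journey(model, start, max_steps, rng):
    """Walk the model from `start`, choosing one available action per step.

    Returns the list of (state, action) pairs visited and the final state.
    The uniform random choice is an assumption made for illustration only.
    """
    state = start
    journey = []
    for _ in range(max_steps):
        actions = model[state]
        if not actions:  # nothing left to do in this state
            break
        action = rng.choice(sorted(actions))
        journey.append((state, action))
        state = actions[action]
    return journey, state


if __name__ == "__main__":
    rng = random.Random(7)  # seeded so repeated runs print the same journeys
    for _ in range(3):
        journey, final = generate_journey(MODEL, "Home", 10, rng)
        print(" -> ".join(action for _, action in journey), "| ends in", final)
```

Even this five-state toy produces many distinct journeys once loops such as returning to Home are allowed, which illustrates how a small model can yield a large number of test scenarios.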

Increase Coverage through Automated Exploratory Testing

Eggplant AI tests what users can do, not just what testers think they should do. Learning algorithms enable Eggplant AI to explore the full range of potential user journeys and cover more states of your website or app than human testers can.

Testers define the connections between items in the model, and can define the weight that actions carry. Weights affect the Eggplant AI algorithms that auto-generate test cases. A more heavily weighted action will occur more frequently than a less heavily weighted action. Testers can also add test cases to the model. This increases the probability that the path selection algorithms in Eggplant AI will hit those cases.
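The effect of weights can be sketched as weighted random selection. This is an illustrative assumption, not a description of the Eggplant AI algorithms: the `ACTION_WEIGHTS` values, the action names, and both functions are hypothetical.

```python
import random
from collections import Counter

# Hypothetical weights for the actions available in a single state.
ACTION_WEIGHTS = {"search": 5.0, "open_cart": 1.0, "log_out": 0.5}


def pick_action(weights, rng):
    """Pick one action with probability proportional to its weight."""
    names = list(weights)
    return rng.choices(names, weights=[weights[n] for n in names], k=1)[0]


def sample_counts(weights, runs, rng):
    """Count how often each action is picked over `runs` selections."""
    return Counter(pick_action(weights, rng) for _ in range(runs))


if __name__ == "__main__":
    counts = sample_counts(ACTION_WEIGHTS, 10_000, random.Random(1))
    for name, n in counts.most_common():
        print(name, n)
```

Over many selections, the more heavily weighted `search` action is chosen far more often than `log_out`, yet the low-weight action is still exercised occasionally.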

While a model might look like a flowchart, it's not. It's more like a road map, and the user journeys Eggplant AI follows during a test run are defined on the fly. Eggplant AI algorithms select the best actions to run. This flexibility makes it easier to test dynamically generated content.

Integration with Eggplant Functional

You connect Eggplant AI with Eggplant Functional to automate test runs. Eggplant AI uses your model to generate the top-level flow of a user journey. You can translate that flow into executable steps in Eggplant Functional using short scripts called snippets. With snippets, you can build a library of actions to test an entire application, not just one given scenario.

You can set up your system so that when you run an Eggplant AI model, associated Eggplant Functional snippets execute as their related actions run in the model. You define the connections between actions and snippets.
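Conceptually, linking actions to snippets resembles a lookup table from action names to executable steps. The sketch below models that idea in plain Python. It is not how Eggplant Functional snippets are written or invoked: the `SNIPPETS` registry, the `snippet` decorator, and the logged step strings are hypothetical stand-ins, and recording a note for an action with no linked snippet is an assumption of this sketch.

```python
# Hypothetical registry that links model action names to executable steps.
SNIPPETS = {}


def snippet(action_name):
    """Register the decorated function as the snippet for `action_name`."""
    def register(func):
        SNIPPETS[action_name] = func
        return func
    return register


@snippet("open_search")
def open_search(log):
    log.append("click search icon")


@snippet("add_to_cart")
def add_to_cart(log):
    log.append("click Add to Cart")


def run_journey(actions):
    """Run the linked snippet for each action, in order, and return a log."""
    log = []
    for action in actions:
        handler = SNIPPETS.get(action)
        if handler is None:
            # Assumption: an action without a linked snippet is only noted.
            log.append(f"(no snippet linked to {action})")
        else:
            handler(log)
    return log


if __name__ == "__main__":
    for line in run_journey(["open_search", "view_item", "add_to_cart"]):
        print(line)
```

Because each snippet is tied to an action rather than to a whole scenario, the same small library of steps can serve every journey generated from the model.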

Note that you must install the Eggplant AI Agent to integrate with Eggplant Functional.

Integration with Eggplant Performance

You configure Eggplant AI with the Eggplant Performance Test Controller to automate performance tests. Eggplant AI uses Eggplant Performance and Eggplant's hosted platform to carry out the performance testing behind the scenes. This integration involves setting up engines for parallel execution and the systems that run the tests against SUTs (systems under test); however, you don't need to configure these systems manually. Running load tests in Eggplant AI is simple, so you can run them without specialized technical knowledge or prior coding experience. For more information on performance testing using Eggplant AI, see Running Performance Tests.

Next Steps

To start using Eggplant AI, follow these steps: